CUDA was created by Nvidia. When it was first introduced, the name was an acronym for Compute Unified Device Architecture, but Nvidia later dropped the common use of the acronym. CUDA-powered GPUs also support programming frameworks such as OpenMP, OpenACC, OpenCL and HIP by compiling such code to CUDA.

The graphics processing unit (GPU), as a specialized computer processor, addresses the demands of real-time, high-resolution, compute-intensive 3D graphics tasks. By 2012, GPUs had evolved into highly parallel multi-core systems that allow efficient manipulation of large blocks of data. This design is more effective than a general-purpose central processing unit (CPU) for algorithms that process large blocks of data in parallel.

The ontology of the CUDA framework can only be described inexactly. In that description, a grid corresponds to a simultaneous call of the same subroutine on many processors, and a thread (also known as "SP", "streaming processor" or "CUDA core", names that are now deprecated) is analogous to an individual scalar operation within a vector operation.

The CUDA platform is accessible to software developers through CUDA-accelerated libraries, compiler directives such as OpenACC, and extensions to industry-standard programming languages including C, C++ and Fortran. C/C++ programmers can use "CUDA C/C++", compiled to PTX with nvcc, Nvidia's LLVM-based C/C++ compiler, or by clang itself. Fortran programmers can use "CUDA Fortran", compiled with the PGI CUDA Fortran compiler from The Portland Group. In addition to libraries, compiler directives, CUDA C/C++ and CUDA Fortran, the CUDA platform supports other computational interfaces, including the Khronos Group's OpenCL, Microsoft's DirectCompute, OpenGL Compute Shader and C++ AMP. Third-party wrappers are also available for Python, Perl, Fortran, Java, Ruby, Lua, Common Lisp, Haskell, R, MATLAB, IDL and Julia, and Mathematica has native support.

In the computer game industry, GPUs are used for graphics rendering and for game physics calculations (physical effects such as debris, smoke, fire and fluids); examples include PhysX and Bullet. CUDA has also been used to accelerate non-graphical applications in computational biology, cryptography and other fields by an order of magnitude or more.

CUDA provides both a low-level API (the CUDA Driver API, non single-source) and a higher-level API (the CUDA Runtime API, single-source). The initial CUDA SDK was made public on 15 February 2007, for Microsoft Windows and Linux. Mac OS X support was added later in version 2.0, which supersedes the beta released on February 14, 2008. CUDA works with all Nvidia GPUs from the G8x series onwards, including the GeForce, Quadro and Tesla lines.

A CUDA program follows a simple processing flow: copy data from main memory to GPU memory, let the GPU's CUDA cores execute the kernel in parallel, then copy the resulting data from GPU memory back to main memory.
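The processing flow (copy data from main memory to GPU memory, execute the kernel in parallel, copy the results back) can be illustrated with a small CUDA Runtime API program. This is only a minimal sketch, not code from any tutorial: the names `add_kernel`, `vector_add` and `check` are hypothetical, and the block size of 256 threads is an arbitrary assumption.

```cuda
#include <cassert>
#include <cstdio>
#include <cstdlib>
#include <vector>
#include <cuda_runtime.h>

// Hypothetical helper: stop with a message if a CUDA Runtime call failed.
static void check(cudaError_t err, const char* what) {
    if (err != cudaSuccess) {
        std::fprintf(stderr, "%s failed: %s\n", what, cudaGetErrorString(err));
        std::exit(EXIT_FAILURE);
    }
}

// Kernel: every thread adds one pair of elements.
__global__ void add_kernel(const float* a, const float* b, float* out, int n) {
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) {
        out[i] = a[i] + b[i];
    }
}

// Hypothetical wrapper: element-wise sum of two equally sized vectors on the GPU.
std::vector<float> vector_add(const std::vector<float>& a,
                              const std::vector<float>& b) {
    const int n = static_cast<int>(a.size());
    std::vector<float> out(n);
    if (n == 0) return out;
    const size_t bytes = static_cast<size_t>(n) * sizeof(float);

    float* d_a = nullptr;
    float* d_b = nullptr;
    float* d_out = nullptr;
    check(cudaMalloc(&d_a, bytes), "cudaMalloc(d_a)");
    check(cudaMalloc(&d_b, bytes), "cudaMalloc(d_b)");
    check(cudaMalloc(&d_out, bytes), "cudaMalloc(d_out)");

    // Step 1: copy data from main memory to GPU memory.
    check(cudaMemcpy(d_a, a.data(), bytes, cudaMemcpyHostToDevice), "copy a");
    check(cudaMemcpy(d_b, b.data(), bytes, cudaMemcpyHostToDevice), "copy b");

    // Step 2: the GPU executes the kernel in parallel (one thread per element).
    const int threads = 256;  // assumed block size
    const int blocks = (n + threads - 1) / threads;
    add_kernel<<<blocks, threads>>>(d_a, d_b, d_out, n);
    check(cudaGetLastError(), "kernel launch");

    // Step 3: copy the result back; this cudaMemcpy waits for the kernel to finish.
    check(cudaMemcpy(out.data(), d_out, bytes, cudaMemcpyDeviceToHost), "copy out");

    cudaFree(d_a);
    cudaFree(d_b);
    cudaFree(d_out);
    return out;
}

int main() {
    std::vector<float> a(1000, 1.0f), b(1000, 2.0f);
    std::vector<float> c = vector_add(a, b);
    assert(c.size() == a.size());
    assert(c[0] == 3.0f && c[999] == 3.0f);
    std::printf("vector_add finished for %zu elements\n", c.size());
    return 0;
}
```

The sketch uses the single-source Runtime API, so host and device code live in one file that nvcc can compile. The same flow could be written against the lower-level Driver API at the cost of more setup code.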

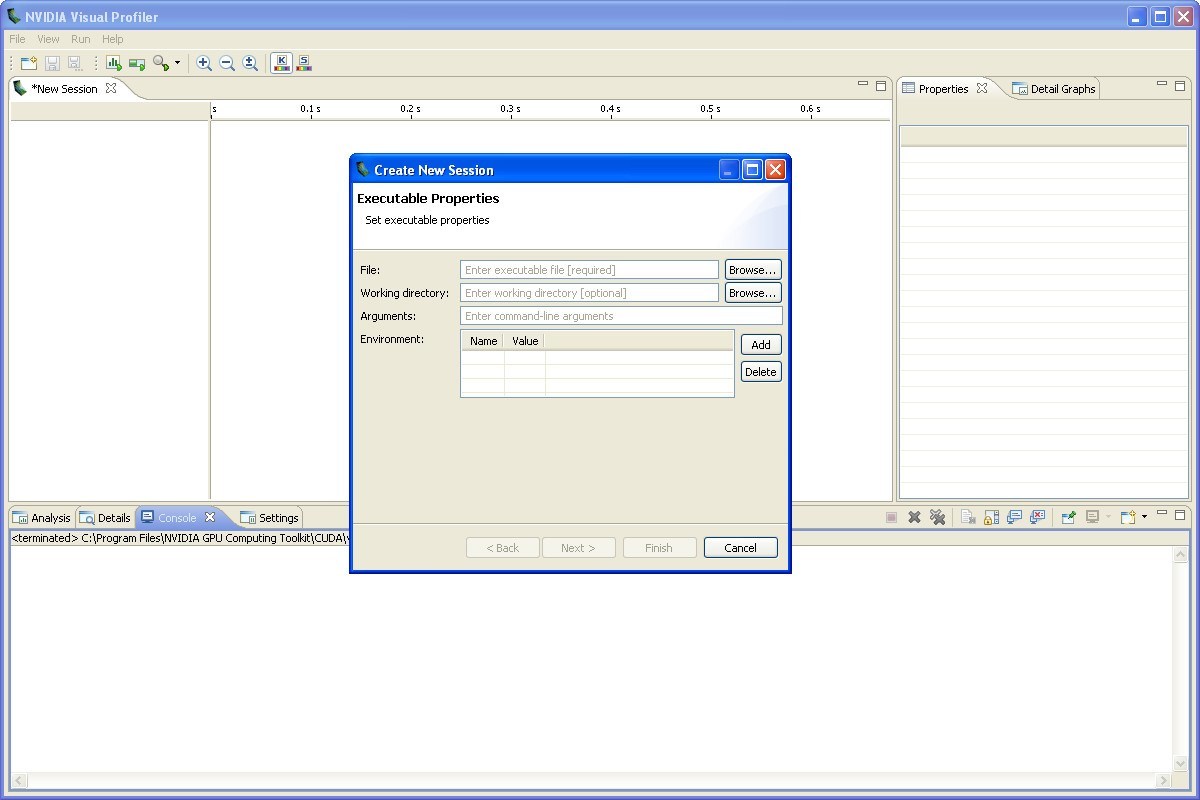

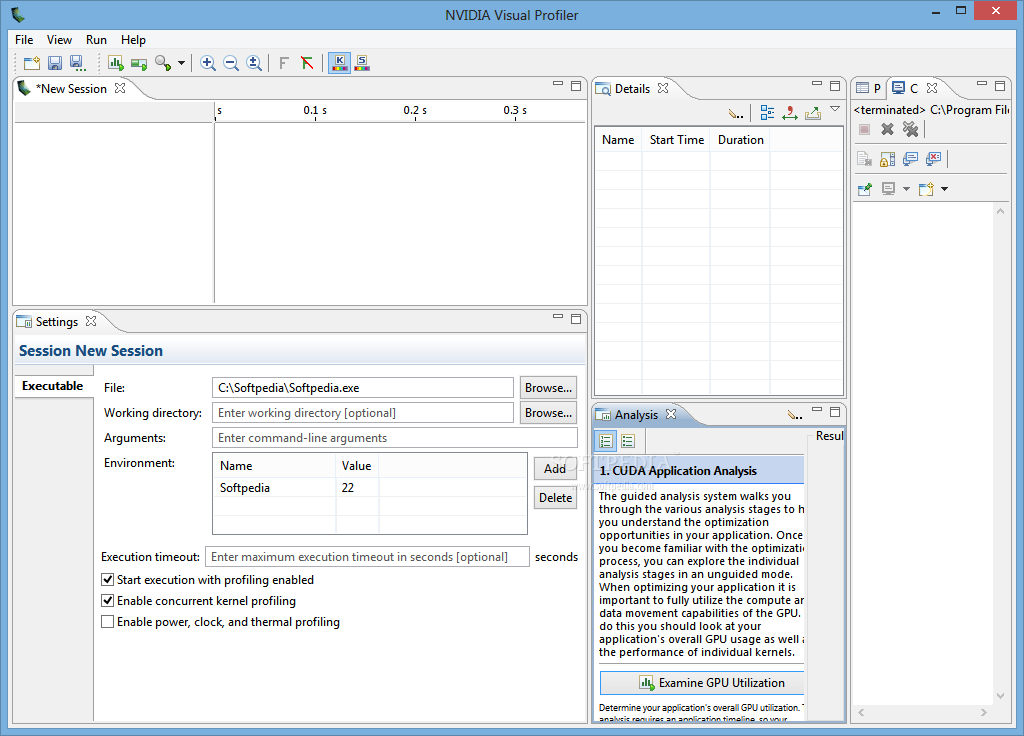

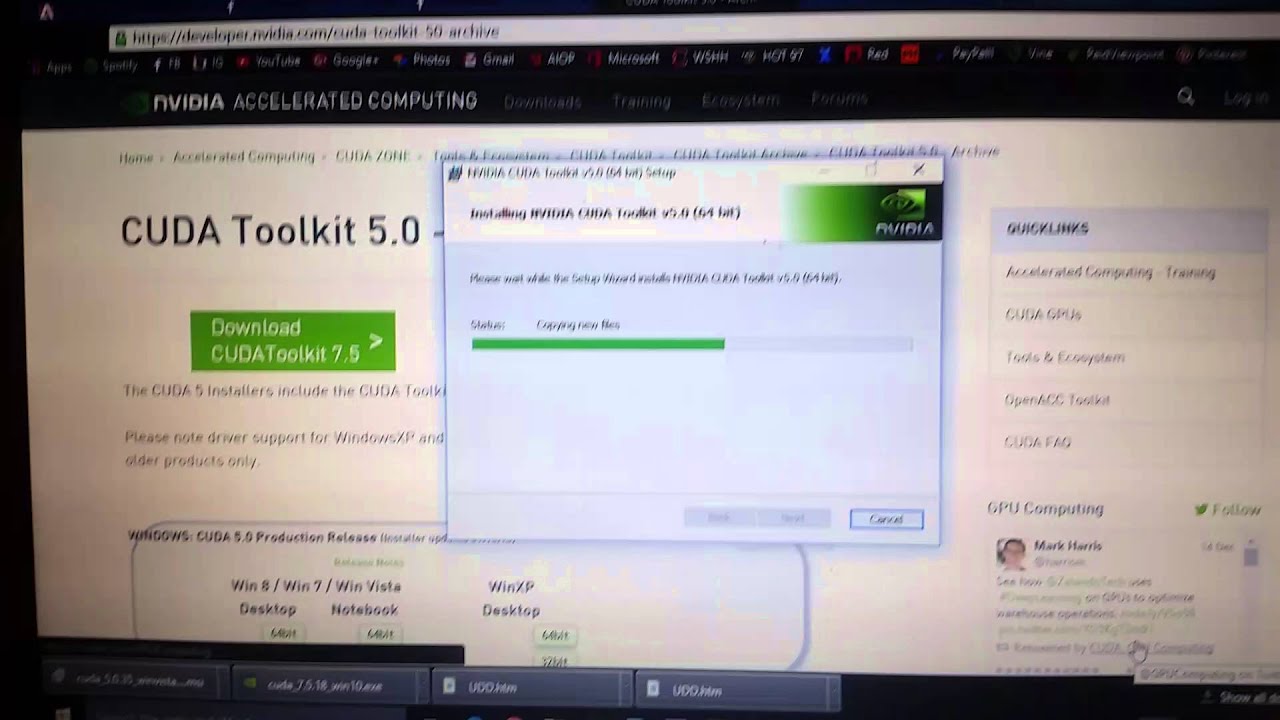

CUDA (or Compute Unified Device Architecture) is a parallel computing platform and application programming interface (API) that allows software to use certain types of graphics processing units (GPUs) for general-purpose processing, an approach called general-purpose computing on GPUs (GPGPU). CUDA is a software layer that gives direct access to the GPU's virtual instruction set and parallel computational elements for the execution of compute kernels. CUDA is designed to work with programming languages such as C, C++ and Fortran. This accessibility makes it easier for specialists in parallel programming to use GPU resources, in contrast to prior APIs like Direct3D and OpenGL, which required advanced skills in graphics programming.

The NVIDIA CUDA Toolkit provides command-line and graphical tools for building, debugging and optimizing the performance of applications accelerated by NVIDIA GPUs, along with runtime and math libraries and documentation including programming guides, user manuals and API references. The CUDA Toolkit is available under the CUDA Toolkit End User License Agreement (see the CUDA Toolkit documentation).

Like other jobs on ICHEC systems, CUDA Toolkit jobs must be submitted using a Slurm submission script. A typical script allocates 1 node of Kay (40 cores) for 30 minutes and then runs matmul, a CUDA-compiled tutorial program that multiplies large matrices using GPU acceleration. Because CUDA jobs run only on GPUs, all of them must be submitted to the GpuQ.

To run the example, first load the module for the desired version of the toolkit. Then, following the ICHEC tutorial, compile the CUDA source file matmul.cu using nvcc. Create the submission script for the Slurm Workload Manager (named myjob.sh here), modify it with your parameters, and submit the job. Further information can be obtained from the CUDA Toolkit website.
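The source of the tutorial program matmul.cu is not shown in this post. As a rough idea of what a GPU matrix multiplication can look like, here is a minimal sketch of a naive kernel for square matrices. It is not the ICHEC tutorial code: the names `matmul_kernel`, `matmul` and `check`, the 16×16 block shape and the matrix size of 512 are all assumptions made for illustration.

```cuda
#include <cassert>
#include <cstdio>
#include <cstdlib>
#include <vector>
#include <cuda_runtime.h>

// Hypothetical helper: stop with a message if a CUDA Runtime call failed.
static void check(cudaError_t err, const char* what) {
    if (err != cudaSuccess) {
        std::fprintf(stderr, "%s failed: %s\n", what, cudaGetErrorString(err));
        std::exit(EXIT_FAILURE);
    }
}

// Naive kernel: each thread computes one element of C = A * B
// for n x n matrices stored in row-major order.
__global__ void matmul_kernel(const float* A, const float* B, float* C, int n) {
    int row = blockIdx.y * blockDim.y + threadIdx.y;
    int col = blockIdx.x * blockDim.x + threadIdx.x;
    if (row < n && col < n) {
        float sum = 0.0f;
        for (int k = 0; k < n; ++k) {
            sum += A[row * n + k] * B[k * n + col];
        }
        C[row * n + col] = sum;
    }
}

// Hypothetical wrapper: multiply two n x n row-major matrices on the GPU.
std::vector<float> matmul(const std::vector<float>& A,
                          const std::vector<float>& B, int n) {
    const size_t count = static_cast<size_t>(n) * n;
    const size_t bytes = count * sizeof(float);
    std::vector<float> C(count);
    if (n == 0) return C;

    float* d_A = nullptr;
    float* d_B = nullptr;
    float* d_C = nullptr;
    check(cudaMalloc(&d_A, bytes), "cudaMalloc(d_A)");
    check(cudaMalloc(&d_B, bytes), "cudaMalloc(d_B)");
    check(cudaMalloc(&d_C, bytes), "cudaMalloc(d_C)");
    check(cudaMemcpy(d_A, A.data(), bytes, cudaMemcpyHostToDevice), "copy A");
    check(cudaMemcpy(d_B, B.data(), bytes, cudaMemcpyHostToDevice), "copy B");

    // One thread per output element, grouped into 16 x 16 blocks (assumed shape).
    dim3 threads(16, 16);
    dim3 blocks((n + threads.x - 1) / threads.x, (n + threads.y - 1) / threads.y);
    matmul_kernel<<<blocks, threads>>>(d_A, d_B, d_C, n);
    check(cudaGetLastError(), "kernel launch");

    // Copying back waits for the kernel to finish.
    check(cudaMemcpy(C.data(), d_C, bytes, cudaMemcpyDeviceToHost), "copy C");

    cudaFree(d_A);
    cudaFree(d_B);
    cudaFree(d_C);
    return C;
}

int main() {
    const int n = 512;  // assumed size, chosen only for the demonstration
    std::vector<float> A(static_cast<size_t>(n) * n, 1.0f);
    std::vector<float> B(static_cast<size_t>(n) * n, 2.0f);
    std::vector<float> C = matmul(A, B, n);
    // Every entry is the sum of n products 1.0 * 2.0.
    assert(C.front() == 2.0f * n && C.back() == 2.0f * n);
    std::printf("matmul finished for a %d x %d matrix\n", n, n);
    return 0;
}
```

A naive kernel like this reads every operand from global memory. Real matrix-multiply codes usually tile the computation through shared memory or call a library such as cuBLAS, so treat the sketch as a starting point rather than a performance reference.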
